
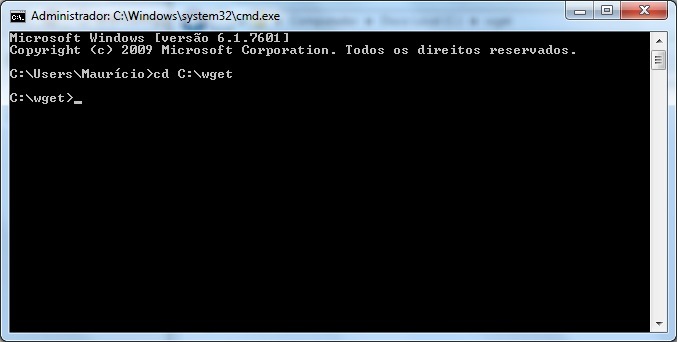

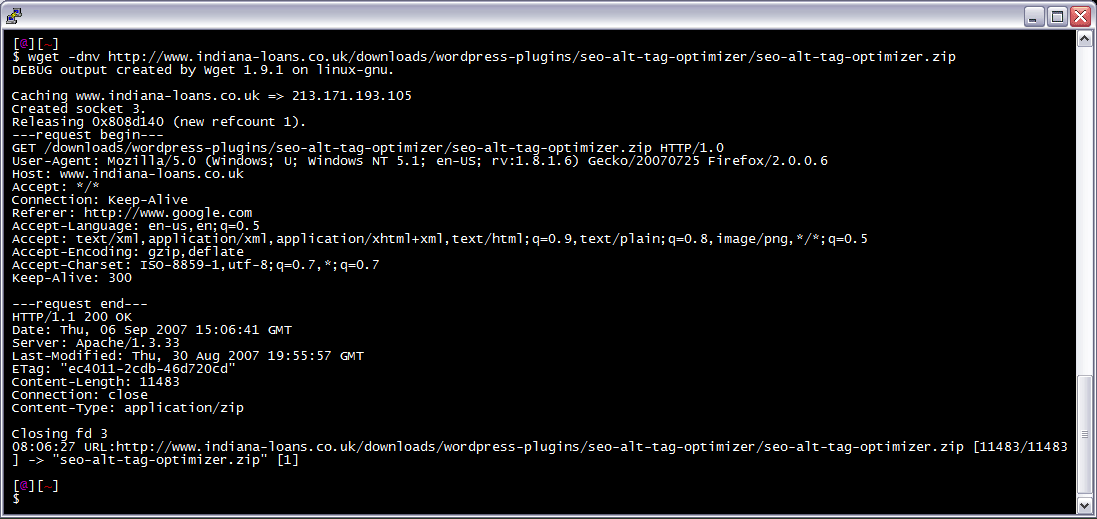

Links in downloaded HTML pages can be adjusted to point to locally downloaded material for offline viewing. If you ever need to download an entire Web site, perhaps for off-line viewing, wget can do the job, for example with a command that begins `wget --recursive --no-clobber`. It can also save a single web page together with its background images. To find the files it needs, Wget retrieves and parses the HTML or CSS.

How do I get wget to download links from an HTML page? I have wget installed, along with a Firefox plugin that generates the wget command for downloading a page. I have added some options as well, but wget only downloads the source page, and when I look at the source page it contains strange characters. Here are the options I am using: `-H -l 1 --tries=inf --retry-connrefused -r --convert-links --append-output=C:\legalassetlog.`
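For readability, the options quoted in the question can be written out in their long forms. The following is only a sketch: the URL and the log file name are placeholders (the original URL is not given and the original log path is cut off), and the `args`, `url`, `log` and `RUN` names are made up for illustration. The script prints the command by default and only starts wget when `RUN=1` is set.

```shell
#!/usr/bin/env bash
# Placeholder values (assumptions, not taken from the original question).
url="${1:-https://example.com/index.html}"
log="${2:-wget.log}"

args=(
  --recursive              # -r: follow links found in the page
  --level=1                # -l 1: go only one level deep
  --span-hosts             # -H: allow links that lead to other hosts
  --tries=inf              # retry indefinitely
  --retry-connrefused      # treat "connection refused" as a transient error
  --convert-links          # rewrite links for local viewing after the download
  --append-output="$log"   # append wget's messages to the log file
)

# Show the command for review; set RUN=1 to actually start the download.
echo "wget ${args[*]} $url"
if [ "${RUN:-0}" = "1" ]; then
  wget "${args[@]}" "$url"
fi
```

Keeping the options in an array makes it easy to comment each one and to pass them to wget without quoting problems.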

GNU Wget is a free software package for retrieving files using HTTP, HTTPS and FTP, the most widely used Internet protocols. It is a non-interactive command-line tool, available as the `wget` command on Linux and Unix systems. The `-k` option (or `--convert-links`) converts the links in your downloaded pages once the download finishes, so that links to files that were downloaded become relative links to the local copies. The conversion has to wait because, as the man page notes, only at the end of the download can Wget know which links have been downloaded.
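As a rough illustration of link conversion on a single page, the sketch below combines `--convert-links` with `--page-requisites`, which asks wget to also fetch the images and stylesheets the page needs. The URL and the output directory are placeholders, and the variable names are hypothetical. As written, the script only prints the command; set `RUN=1` to run it.

```shell
#!/usr/bin/env bash
# Placeholder values (assumptions for illustration only).
page_url="${1:-https://example.com/article.html}"
out_dir="${2:-offline-copy}"

page_args=(
  --page-requisites               # -p: also fetch images, CSS and similar files
  --convert-links                 # -k: point links at the local copies afterwards
  --directory-prefix="$out_dir"   # -P: save everything under this directory
)

# Show the command for review; set RUN=1 to actually start the download.
echo "wget ${page_args[*]} $page_url"
if [ "${RUN:-0}" = "1" ]; then
  wget "${page_args[@]}" "$page_url"
fi
```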
